A new study from UC Davis suggests that recommendation algorithms on sites like YouTube and TikTok can play a role in political radicalization. If the algorithm sees that a user is watching a lot of biased political videos, it can trap them in what the researchers call a “loop effect,” in which the system keeps recommending similarly biased, and potentially more extreme, content on their homepage and sidebar. Left unchecked, this can lead to polarization and radicalization among both right-wing and left-wing users.

“The system is doing what it’s supposed to be doing, but it has issues that are external to the system,” said Computer Science Ph.D. student Muhammad Haroon, who led the study. “Unless you willingly choose to break out of that loop, all the recommendations on that system will be zeroing on that one particular niche interest that they’ve identified. This can lead to partisanship and increases the divide that is facing American society.”

To quantify bias, the team assigned each video a score from -1 (far left) to +1 (far right) based on the ratio of left- to right-wing accounts that shared the video on Twitter. For example, if users who followed Alexandria Ocasio-Cortez shared a video more often than users who followed Ted Cruz, the video received a more negative score. Initial tests validated the approach: videos from far-right channels like Breitbart routinely scored close to +1, while videos from far-left channels scored close to -1.
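The article does not give the exact formula that turns share counts into a score. One simple mapping onto the -1 to +1 scale is the normalized difference (R - L)/(R + L), where L and R are the numbers of left- and right-leaning accounts that shared a video. The Python sketch below assumes that formula; the function name `slant_score` is hypothetical and is not taken from the study's code, and classifying accounts as left or right (for example, by whom they follow) is assumed to happen upstream.

```python
def slant_score(left_shares: int, right_shares: int) -> float:
    """Hypothetical slant score on the article's scale: -1 = far left, +1 = far right.

    left_shares / right_shares: number of left- and right-leaning Twitter
    accounts that shared the video. The normalized-difference formula is an
    assumption, not the study's published method.
    """
    total = left_shares + right_shares
    if total == 0:
        raise ValueError("video was not shared by any classified account")
    return (right_shares - left_shares) / total


print(slant_score(90, 10))  # -0.8: shared mostly by left-leaning accounts
print(slant_score(0, 40))   # 1.0: shared only by right-leaning accounts
print(slant_score(25, 25))  # 0.0: evenly split
```

For any video shared by at least one left-leaning account, this score is a monotone function of the right-to-left share ratio r = R/L, since (R - L)/(R + L) = (r - 1)/(r + 1). It therefore ranks videos the same way a raw ratio would while staying bounded between -1 and +1.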

Next, the team trained sock puppets: automated accounts that behave like users but are controlled by the researchers. Each day, every sock puppet watched a series of right- or left-leaning videos, and the team then compared the recommendations on its homepage over time to see whether they gradually became more biased.
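The article describes this comparison only qualitatively. As a hedged illustration, one could summarize each day's homepage by the mean slant score of its recommended videos, then fit a least-squares line through the daily means: a positive slope means drift toward the right (+1), a negative slope drift toward the left (-1). The helper names `daily_bias` and `bias_drift`, and the choice of a linear fit, are assumptions for this sketch rather than the study's method.

```python
from statistics import mean

# Hypothetical helpers; the study's actual drift metric is not described in the article.


def daily_bias(homepages: list[list[float]]) -> list[float]:
    """Mean slant score (-1 far left .. +1 far right) of each day's homepage."""
    return [mean(day) for day in homepages]


def bias_drift(series: list[float]) -> float:
    """Least-squares slope of the daily bias series (change in bias per day)."""
    n = len(series)
    if n < 2:
        raise ValueError("need at least two days of observations")
    x_bar = (n - 1) / 2  # mean of the day indices 0..n-1
    y_bar = mean(series)
    num = sum((x - x_bar) * (y - y_bar) for x, y in enumerate(series))
    den = sum((x - x_bar) ** 2 for x in range(n))
    return num / den


# Example: a right-leaning sock puppet whose homepage drifts rightward.
homepages = [[0.0, 0.5], [0.25, 0.75], [0.5, 1.0]]
print(daily_bias(homepages))              # [0.25, 0.5, 0.75]
print(bias_drift(daily_bias(homepages)))  # 0.25
```

A slope is only one possible summary; it assumes roughly linear change and would miss, for example, a sudden jump after a channel is banned.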

“Some notion of radicalization was achieved in these sets of results,” said Haroon. “We found that it’s worse for the right wing, but it’s a left-wing issue as well.”

Algorithm a moving target

Initially, radicalization seemed to be twice as bad for right-wing users, but Haroon says that over time, as a few key channels were suspended or banned, the problem became roughly equivalent on both sides. He also noted that what counts as far-left content (progressivism) and far-right content (largely conspiracy theories) are not equal in extremity, and that the algorithm is always changing as new videos are uploaded and deleted every day.

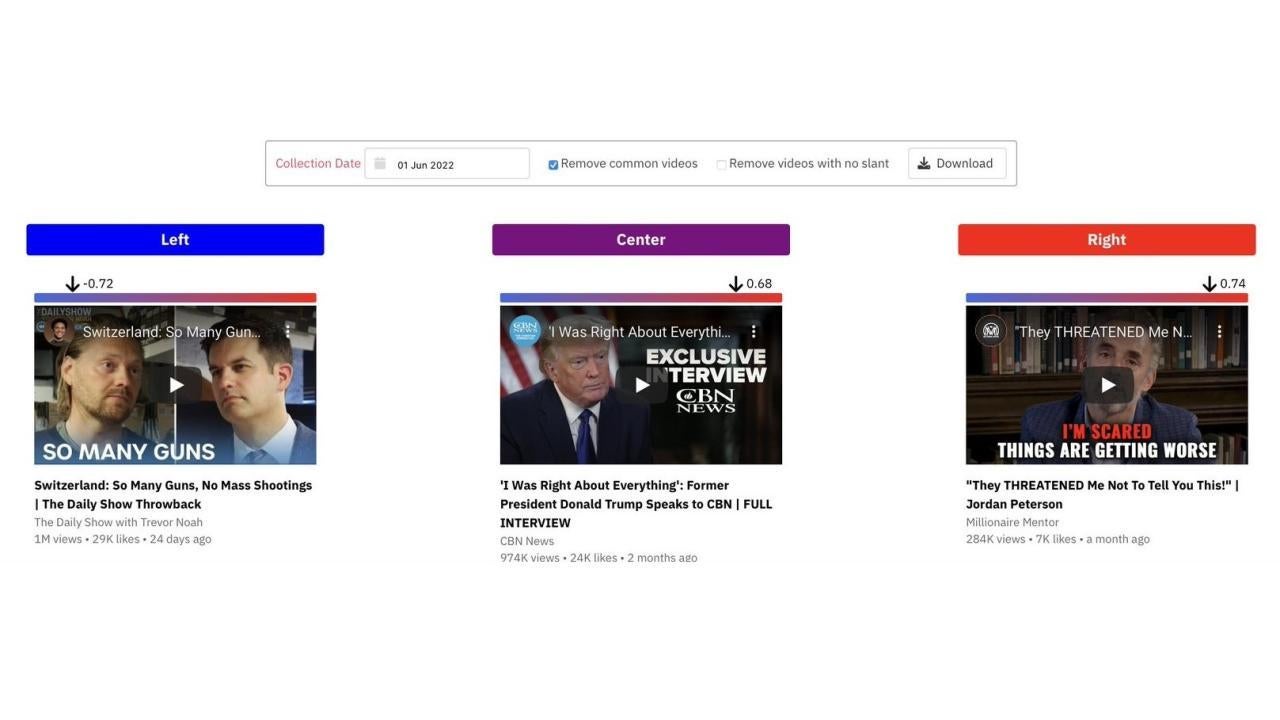

That’s why the team has kept a few sock puppets running over the past several months. They’ve shared their results on a demo site called YouTube Audit, where people can compare the recommended homepage videos of left-, center- and right-leaning users on any given day. Haroon is especially interested in seeing whether and how the recommendations change during major political events such as elections.

“This algorithm is a moving target,” he said. “It’s always going to evolve so hopefully, going forward, we will have this whole snapshot of YouTube’s entire algorithm evolution over time.”

They are also working on solutions to prevent radicalization. One idea is to develop a system that monitors bias on a user’s homepage and systematically “inoculates” it with unbiased videos. They’re also looking into how non-political content, such as cooking videos or sermons, can still carry an inherent political bias and play a role in polarization.
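The article describes inoculation only as an idea. A minimal sketch, assuming each feed item already has a slant score, might check the homepage's mean slant and, while it exceeds a threshold, swap the item pushing hardest in the direction of the bias for the most neutral video available. The function name `inoculate`, the 0.3 threshold and the swap rule are all hypothetical choices for illustration, not the researchers' design.

```python
from statistics import mean

Video = tuple[str, float]  # (video id, slant score in [-1, +1])


def inoculate(feed: list[Video], neutral_pool: list[Video],
              max_bias: float = 0.3) -> list[Video]:
    """Swap the most slanted items for neutral ones until |mean slant| <= max_bias.

    Hypothetical sketch: the threshold and swap rule are illustrative assumptions.
    """
    feed = list(feed)  # copy so the caller's list is not mutated
    pool = sorted(neutral_pool, key=lambda v: abs(v[1]))  # most neutral first
    while feed and pool:
        bias = mean(score for _, score in feed)
        if abs(bias) <= max_bias:
            break
        direction = 1 if bias > 0 else -1
        # Position of the item pushing hardest in the direction of the current bias.
        worst = max(range(len(feed)), key=lambda i: feed[i][1] * direction)
        feed[worst] = pool.pop(0)  # replace it in place, keeping feed order
    return feed


feed = [("a", 0.9), ("b", 0.8), ("c", 0.1), ("d", -0.2)]
pool = [("n2", 0.05), ("n1", 0.0)]
print(inoculate(feed, pool))
# [('n1', 0.0), ('b', 0.8), ('c', 0.1), ('d', -0.2)]
```

Swapping one item at a time keeps most of the personalized feed intact, which reflects the trade-off Haroon describes between reducing bias and keeping recommendations relevant.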

“These systems are all opaque to the end user, who doesn’t really know what data they’re feeding off,” he said. “One of our larger themes is making them more transparent to give users a bit more control over the algorithm and what they’re being recommended.”

Haroon acknowledges that inoculation may make recommendations less relevant, which might not be in companies’ best interests. However, he argues that it would mainly hurt creators of extremist political content and discourage them from using the platform, which would be a net positive for the world.

“This is a problem that’s bigger than YouTube,” he said. “It’s a problem of how online systems operate and then zeroing in on how a problem persists.”

The study is part of a collaboration among Haroon’s PI, Associate Professor Zubair Shafiq; CS Professors Xin Liu and Prasant Mohapatra; and Professor Magdalena Wojcieszak of the Department of Communication. The collaboration examines “good AI vs. bad AI,” meaning the ethics and fairness issues associated with online AI systems. Though he comes from a purely technical background, Haroon was drawn to the project by the chance to tackle social issues as well.

“I was interested in how I can use my knowledge and skills as a computer scientist to make an impact outside the computer science community,” he said.


Noah Pflueger-Peters writes about research at the UC Davis College of Engineering.