Quick Summary

- Complex memories allow us to learn quickly and make inferences about the future

- Project will model complex memories with computers

- Ultimate goal is for machines to learn in the same way as humans

Much of what scientists know about human memory comes from studies involving relatively simple acts of recollection — remembering lists of words or associations between names and faces.

However, they know very little about the brain networks that support memories for complex events, such as remembering the plot of a book or movie, or what we experienced, thought and felt during a childhood birthday party.

A multi-university study led by a neuroscientist at the University of California, Davis, aims to vastly deepen that understanding by developing a computer model of how the brain forms, stores and retrieves complex memories. The goal is a model with humanlike abilities to remember, understand and learn from events.

The project — recently awarded a $7.5 million, five-year grant from the U.S. Department of Defense — could lead to an evolutionary leap in the development of artificial intelligence. It could also open new avenues for understanding Alzheimer’s disease, dementia and other memory disorders.

“Our brains have a remarkable capability to remember past events and to use our memories to make inferences in the present and predictions about the future,” said Charan Ranganath, a professor in the UC Davis Department of Psychology and the Center for Neuroscience who is the project’s principal investigator.

“For instance, if you go out to eat at a formal restaurant, before you even walk in, you can guess the sequence of events that will unfold (seating, taking your order, appetizers, etc.), and the roles that people will play (host, waiter, bus-person, chef, etc.). People can learn this kind of knowledge about particular kinds of events, which we call ‘event schemas,’ after only a few experiences.”

While scientists have identified areas of the brain involved in memory, they know very little about the brain networks involved in creating those schemas, which help us sort events into categories, learn and anticipate what might happen next.

Joining Ranganath in tackling this huge problem are researchers Ken Norman and Uri Hasson of Princeton University, Samuel Gershman of Harvard University, Jeffrey Zacks of Washington University in St. Louis, and Orrin Devinsky of New York University’s Comprehensive Epilepsy Center. Each investigator brings a different kind of expertise needed to map and create a computer model of the brain’s memory networks.

Ranganath said every prong of the study will break new ground for research methods in the field. For instance, the team will develop new tools to help decode brain activity in real time as a person recalls a past event.

“One of the key ideas in our project is that people intuitively understand and remember events in terms of relationships,” Ranganath said. “Our modeling approach is intended to capture this humanlike approach by extracting knowledge about roles and relationships from specific events.”

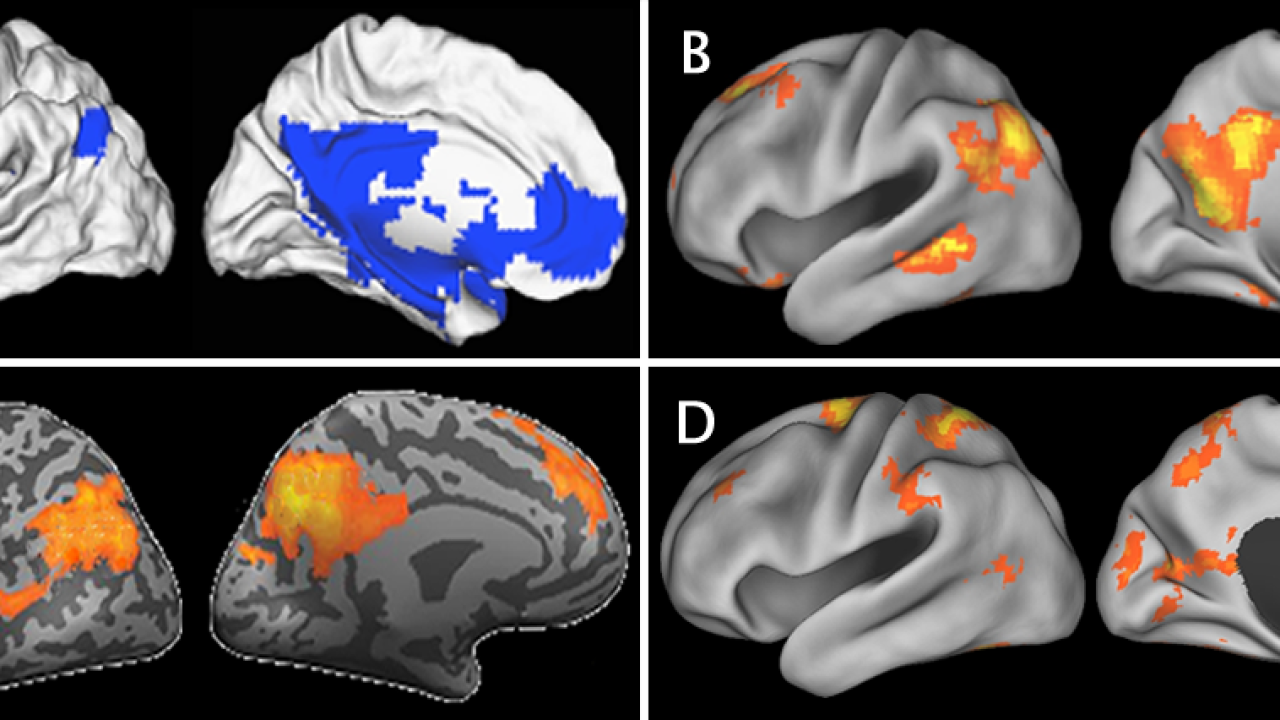

The Harvard and Princeton teams will develop the computer model, and the UC Davis, Washington University, and Princeton scientists will run functional magnetic resonance imaging studies to test and refine the model. The UC Davis team will also analyze recordings of electrical brain activity collected from patients at NYU who have electrodes implanted in the brain as part of a medical evaluation for epilepsy surgery. Ranganath, an expert on the neural mechanisms of human memory, will focus on the interactions between the hippocampus and cortex in retrieving and consolidating memories for real-life events.

Implications for improving memory

Ranganath’s initial studies already have some practical implications for how memory can be improved in the real world. “In our studies, we have people take a tour of the UC Davis California Raptor Center, and after the tour, we use pictures from a wearable camera to test people on what they learned during the tour,” he said. “Our initial results show that using cameras in this way can significantly improve one’s memory. With this project, we will be able to investigate what is happening in the brain as people recall events from the raptor tour.”

People sometimes think of memories like files on a computer, but that’s not the way human memory works, Ranganath said. Instead of simply storing data, the human brain consolidates memories of experiences and perceptions to learn, make inferences, and modify and update knowledge. Ranganath’s team predicts that, when an event is recollected, the memory can be modified and updated. “That dynamic aspect of memory is a little different than computer storage, where a file can be processed without saving anything to memory.”

While the field of artificial intelligence is advancing rapidly, computers and smart devices do best when they are trained to do specific tasks, like recognizing a face, he said.

“It’s much harder to train a computer to learn information that can be used to support a wide range of inferences and predictions. If you have tried to use Siri or voice commands on an Android phone, you’ll soon see that there is a lot that gets lost in translation.”

The computer model envisioned in this project would be more like the Star Wars droid R2-D2, able to analyze, infer and learn from events.

The Department of Defense is excited about the project because the resulting model could be used for national security purposes. For instance, it could be used to analyze video footage for signs of terrorist activity. But the technology could have much wider applications.

“Really, if you think about it, a machine that is capable of learning schemas to understand events would have an endless range of applications,” Ranganath said.

Media Resources

Charan Ranganath, UC Davis Center for Neuroscience, 530-757-8750, cranganath@ucdavis.edu

Andy Fell, UC Davis News and Media Relations, 530-752-4533, ahfell@ucdavis.edu